The AI generalist is, in a sense, a very old idea wearing a new name. The Renaissance polymath. The Victorian natural philosopher who studied botany on Monday and economics on Thursday. The early-internet-era “webmaster” who could write copy, build the site, run the server, and handle the client call. Every generation of technological disruption produces a window, usually brief, in which the person who can bridge multiple domains earns a premium over the person who masters only one. We are in that window right now, and it is closing faster than most people realize.

The specialization argument made sense for the conditions that produced it. When tools were stable for decades, depth paid off. Master the tool; the tool doesn’t change. What AI has done is collapse the useful lifespan of narrow technical skills from decades to years to, in some cases, months. A junior developer who spent three years mastering boilerplate code found in 2025 that agentic AI systems could write, debug, and deploy that code faster than they could. Those years of depth didn’t disappear; they just stopped being scarce. And compensation follows scarcity, not effort.

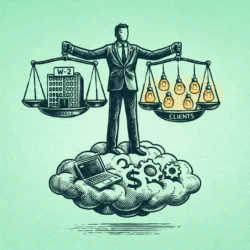

The honest version of the AI generalist argument isn’t that deep expertise is worthless. It’s that single-domain expertise is no longer sufficient protection, and that the connective tissue between domains — the ability to move fluidly from a business problem to a technical solution to a communication challenge and back — is what AI cannot currently replicate. LinkedIn’s 2025 Jobs on the Rise report found that listings for AI integration and AI operations roles grew 25% year-over-year, while specialist roles in copywriting, translation, and basic financial analysis declined. The market is not rewarding breadth instead of depth. It’s rewarding breadth that contains multiple depths — what some researchers are now calling the M-shaped skill profile.

What the Role Actually Involves

The cleanest way to understand what an AI generalist does is through what they’re not doing. They are not the person executing the task. They are the person who determines which tool executes which task, sequences the workflow, evaluates the output for business fit, and catches the errors that surface when AI systems are asked to do things slightly outside their training. The term “human middleware” has started appearing in job descriptions at growth-stage companies, and it captures the function accurately if not elegantly. The middleware doesn’t do the computing. It routes, translates, and error-corrects between systems that don’t naturally speak to each other.

In practice, this looks like an operator who understands enough about API logic to connect a research tool to a drafting tool to a distribution tool without writing custom code, and enough about the business to know when the output is confidently wrong rather than obviously wrong. The confidently-wrong problem is the one that requires human judgment, because AI systems rarely flag their own hallucinations. Someone with domain knowledge across multiple fields can recognize when a generated output contradicts something they know from an adjacent field. That person catches errors a narrow specialist misses, because the error lives outside the specialist’s own field.
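The routing and error-catching described above can be sketched in a few lines of Python. This is only an illustration of the pattern, the same wiring a no-code platform would do visually: `research` and `draft` are hypothetical stand-ins for real tools, and `known_facts` stands for whatever the operator knows from an adjacent domain. The check is deliberately crude (a substring match), so treat it as a sketch of the review gate, not a real fact-checker.

```python
# Hypothetical stand-ins for real tools; in practice each would be an API call.
def research(topic: str) -> str:
    return f"Notes on {topic}: revenue grew 12% in Q3."


def draft(notes: str) -> str:
    return f"DRAFT: {notes}"


def find_contradictions(text: str, known_facts: dict[str, str]) -> list[str]:
    """Flag claims that appear in the text without the figure the operator expects."""
    problems = []
    for claim, expected in known_facts.items():
        if claim in text and expected not in text:
            problems.append(f"'{claim}' should be {expected}")
    return problems


def run_pipeline(topic: str, known_facts: dict[str, str]) -> dict:
    """Chain research -> draft, then hold the output if it contradicts known facts."""
    text = draft(research(topic))
    problems = find_contradictions(text, known_facts)
    status = "held_for_review" if problems else "published"
    return {"status": status, "text": text, "problems": problems}


if __name__ == "__main__":
    # The operator knows from the finance side that growth was 8%, not 12%.
    print(run_pipeline("Acme", {"revenue grew": "8%"}))
```

The point of the gate is that nothing in the draft looks wrong on its face; it only fails against knowledge the operator brings from outside the tool.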

The salary data reflects how much companies currently value this. AI Product Manager roles in the US market range from $165,000 to $240,000, a range that reflects a combination of technical literacy and user empathy that neither a pure engineer nor a pure product person typically carries. Chief of Staff roles at technology companies, which require operational depth, strategic fluency, and now AI workflow management, pay $150,000 to $210,000. Growth Operator roles, which require data analysis, marketing instinct, and automation tooling, command $140,000 to $200,000. These aren’t exotic job titles. They’re variations on roles that have existed for years, now substantially redefined by the expectation that the person filling them can also build and manage the AI systems their team depends on.

The skill stack that produces this profile has four components, and none of them requires a computer science degree. Technical literacy means understanding API logic, context windows, and basic JSON well enough to connect tools and debug workflows; this is not software engineering, it’s plumbing. Business logic means understanding unit economics and P&L well enough to filter the AI’s effectively unlimited output for what’s actually profitable. Human EQ (negotiation, storytelling, ethical judgment) remains the genuine moat, because the capability AI most conspicuously lacks is reading a room. Orchestration, the ability to chain tools through platforms like Zapier, Make, or n8n, is what the original “can you code?” question has evolved into. Connecting existing systems intelligently is now more valuable than building new ones from scratch.
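To make the “plumbing” level of technical literacy concrete, here is a minimal sketch of what handling basic JSON and a context window can look like. Everything specific in it is an assumption for illustration: the field names (`title`, `body`, `tags`), the `to_draft_request` helper, the 8,000-token limit, and the four-characters-per-token estimate, which is only a rough rule of thumb and not how any particular model counts tokens.

```python
import json

# Example payload as one tool might return it (field names are hypothetical).
RAW = '{"title": "Q3 summary", "body": "Revenue grew 12% in Q3.", "tags": ["finance", "q3"]}'


def to_draft_request(raw_json: str, max_tokens: int = 8000) -> dict:
    """Reshape one tool's JSON output into the input another tool expects."""
    record = json.loads(raw_json)
    prompt = f"{record['title']}\n\n{record['body']}"

    # Rough size estimate: about four characters per token (an assumption).
    approx_tokens = len(prompt) // 4
    if approx_tokens > max_tokens:
        raise ValueError(
            f"Prompt is roughly {approx_tokens} tokens; limit is {max_tokens}"
        )

    return {
        "prompt": prompt,
        "labels": record.get("tags", []),  # tolerate a missing optional field
        "approx_tokens": approx_tokens,
    }


if __name__ == "__main__":
    print(to_draft_request(RAW))
```

Most workflow debugging at this level comes down to the two things shown here: a field that is missing or renamed, or an input that is too large for the tool receiving it.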

How to Build This Without Starting Over

The transition from specialist to AI generalist doesn’t require quitting and enrolling in a bootcamp. It requires a specific kind of attention applied to your current work. Start by identifying one expensive, repetitive problem in your existing role — something that takes four hours a week and follows a predictable pattern. Map the manual steps. Calculate the time cost. Then build a prototype that automates it, using no-code tools if you have no coding background. The prototype doesn’t have to be elegant. It has to work well enough to demonstrate the ROI. That demonstration is the actual skill-building: the process of translating a business problem into a technical solution and presenting the result to someone who controls budget.
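The time-cost and ROI step can be worked through with simple arithmetic. The sketch below assumes a task that takes four hours a week, as in the example above; the hourly cost, build time, tool subscription, and 48 working weeks are hypothetical figures chosen only to show the calculation, and `automation_roi` is an illustrative helper, not a standard formula.

```python
from typing import Optional


def automation_roi(
    hours_per_week: float,
    hourly_cost: float,
    build_hours: float,
    monthly_tool_cost: float,
    weeks_per_year: int = 48,
) -> dict:
    """Estimate first-year net saving and payback time for automating a task."""
    annual_saving = hours_per_week * weeks_per_year * hourly_cost
    build_cost = build_hours * hourly_cost
    annual_tool_cost = monthly_tool_cost * 12
    net_first_year = annual_saving - build_cost - annual_tool_cost

    # Payback: weeks until the one-off build cost is recovered,
    # after subtracting the running cost of the tool each week.
    net_weekly_saving = hours_per_week * hourly_cost - annual_tool_cost / weeks_per_year
    payback_weeks: Optional[float] = (
        build_cost / net_weekly_saving if net_weekly_saving > 0 else None
    )

    return {
        "annual_saving": annual_saving,
        "net_first_year": net_first_year,
        "payback_weeks": payback_weeks,
    }


if __name__ == "__main__":
    # Hypothetical inputs: $60/hour, 20 hours to build, $30/month for tooling.
    print(automation_roi(hours_per_week=4, hourly_cost=60,
                         build_hours=20, monthly_tool_cost=30))
```

With these assumed inputs the first-year net saving comes to $9,960 and the build pays for itself in a little over five weeks. A number like that, stated with its assumptions, is what the person who controls the budget needs to see.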

This is also how you become visible as an AI generalist internally, which matters as much as the skill itself. Structural job security in 2026 comes from being the person whose departure would break something critical. For the AI generalist, that means being the person who built the workflow everyone else relies on, understands the system prompt that makes the output usable, and knows which tool to swap when the original stops working. The personal AI chief of staff model — where you architect a system of tools that handles your administrative and research load — is worth building for your own productivity as much as for the professional signal it sends.

The window for this transition is real but not infinite. Skills-based hiring is spreading, and as it does, credentials matter less than demonstrated capability. That is good news for people building new skills mid-career and bad news for anyone waiting for a formal certification to validate the move. The companies currently paying $200,000 for AI generalists are doing so because supply is thin. As every business school starts graduating people who learned prompt engineering alongside accounting, the premium will compress. The advantage belongs to people who built the capability before it became curriculum. For senior professionals especially, the AI generalist skill set maps directly onto the fractional and consulting market: the ability to deliver cross-functional results without a full-time headcount is precisely what growth-stage companies are paying fractional operators to do. The Renaissance window is open. It rewards the person who walks through it before the course catalog catches up.
