I’ll be honest — I spent most of 2023 genuinely impressed by ChatGPT. The ability to ask a question and get a coherent, researched answer in seconds felt like a meaningful shift. I used it constantly. I still do, in certain contexts.

But somewhere around mid-2025, I started noticing something. Every interaction ended the same way. I’d get a response, copy it, paste it somewhere, edit it, then go do the actual thing myself. The AI did the thinking. I did the doing. And the doing — the scheduling, the sending, the updating, the following up — was still entirely my problem.

That’s not a workflow. That’s a very fast research assistant that stops working the moment you close the tab.

What’s replacing it is a genuinely different category of technology. Not an upgrade to chatbots — a replacement for the entire model. And the companies that understood this distinction early are already operating in a way that makes everyone else look like they’re doing things manually. Which, increasingly, they are.

The Fundamental Problem With Every Chatbot Ever Built

Here’s the thing about chatbots that nobody really said plainly: they were designed to be read-only.

A chatbot consumes your question, generates output, and hands it back to you. The conversation ends there. You still have to do something with what it produced. Copy the draft, send the email, update the spreadsheet, book the meeting, file the ticket. Every single interaction puts you back in the loop as the last step — the human who has to execute what the AI just described.

That architecture made sense when AI couldn’t reliably take actions in the world. When the risk of an AI doing something wrong was higher than the cost of a human doing it manually. But that calculus has shifted, and the agentic AI market — projected to hit $22.1 billion by 2026 — is the financial expression of companies realizing the bottleneck was never the AI’s intelligence. It was the AI’s inability to actually finish anything.

Agentic AI doesn’t generate output and wait. It pursues a goal across multiple steps, multiple systems, and multiple decisions — and it reports back when the work is done, not when the draft is ready for you to act on.

The difference sounds incremental when you describe it abstractly. The real-world numbers make it concrete.

What This Looks Like When It’s Actually Working

Hewlett Packard Enterprise built an internal AI agent called Alfred to handle performance reviews. Before Alfred, the process consumed 40+ hours per quarter from team leads — pulling data from ERPs and CRMs, running SQL queries, building visualizations, translating numbers into narrative summaries. It was the kind of work that was important enough to do carefully but tedious enough that it always got deprioritized.

Alfred runs four specialized agents in sequence: one that parses the request, one that pulls data from the warehouse, one that builds the charts, and one that writes the report. The result was a reduction from 40 hours to 4. Not 40 hours done faster — 40 hours of human time replaced by 4 hours of review and judgment calls that actually required a person.
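To make the "four agents in sequence" idea concrete, here is a minimal sketch of a sequential pipeline in Python. It is not HPE's implementation, which the source doesn't describe in detail. Every name here (`ReviewState`, `parse_request`, `pull_data`, `build_charts`, `write_report`) is hypothetical, and each stage is a stub standing in for what would really be a model call or a warehouse query.

```python
from dataclasses import dataclass, field


@dataclass
class ReviewState:
    """Shared state handed from one stage to the next."""
    request: str
    metrics: list[str] = field(default_factory=list)
    rows: list[dict] = field(default_factory=list)
    charts: list[str] = field(default_factory=list)
    report: str = ""


def parse_request(state: ReviewState) -> ReviewState:
    # Stub parser: treat each comma-separated token as a metric name.
    state.metrics = [m.strip() for m in state.request.split(",") if m.strip()]
    return state


def pull_data(state: ReviewState) -> ReviewState:
    # Stub for a warehouse query: fabricates one placeholder value per metric.
    state.rows = [{"metric": m, "value": len(m)} for m in state.metrics]
    return state


def build_charts(state: ReviewState) -> ReviewState:
    # Stub for chart rendering: records a chart label per row.
    state.charts = [f"chart:{row['metric']}" for row in state.rows]
    return state


def write_report(state: ReviewState) -> ReviewState:
    # Stub for narrative generation: one line per metric.
    state.report = "\n".join(f"{r['metric']} = {r['value']}" for r in state.rows)
    return state


# Order matters: each stage depends on what the previous one produced.
PIPELINE = [parse_request, pull_data, build_charts, write_report]


def run_pipeline(request: str) -> ReviewState:
    state = ReviewState(request=request)
    for stage in PIPELINE:
        state = stage(state)
    return state


final = run_pipeline("revenue, churn")
print(final.report)
```

The point of the structure is that a person only looks at `final.report` and the charts at the end. Nothing between the request and the finished report waits on a human.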

ContraForce’s security platform is the more dramatic case. Security incident response used to cost their clients over $100 per incident in human labor — triage, assessment, playbook execution, documentation. Their agentic system handles 80% of incidents end-to-end, at under $1 each. The agent decides whether to escalate to a human. Most of the time, it doesn’t need to.

I’ve been tracking these enterprise deployments for about a year now, and the pattern that keeps emerging is the same one. The wins aren’t coming from AI that’s smarter at answering questions. They’re coming from AI that’s been given permission to actually complete something.

The Architecture That Makes This Possible

The reason agentic AI works differently isn’t magic — it’s a structural change in how the system is designed to operate.

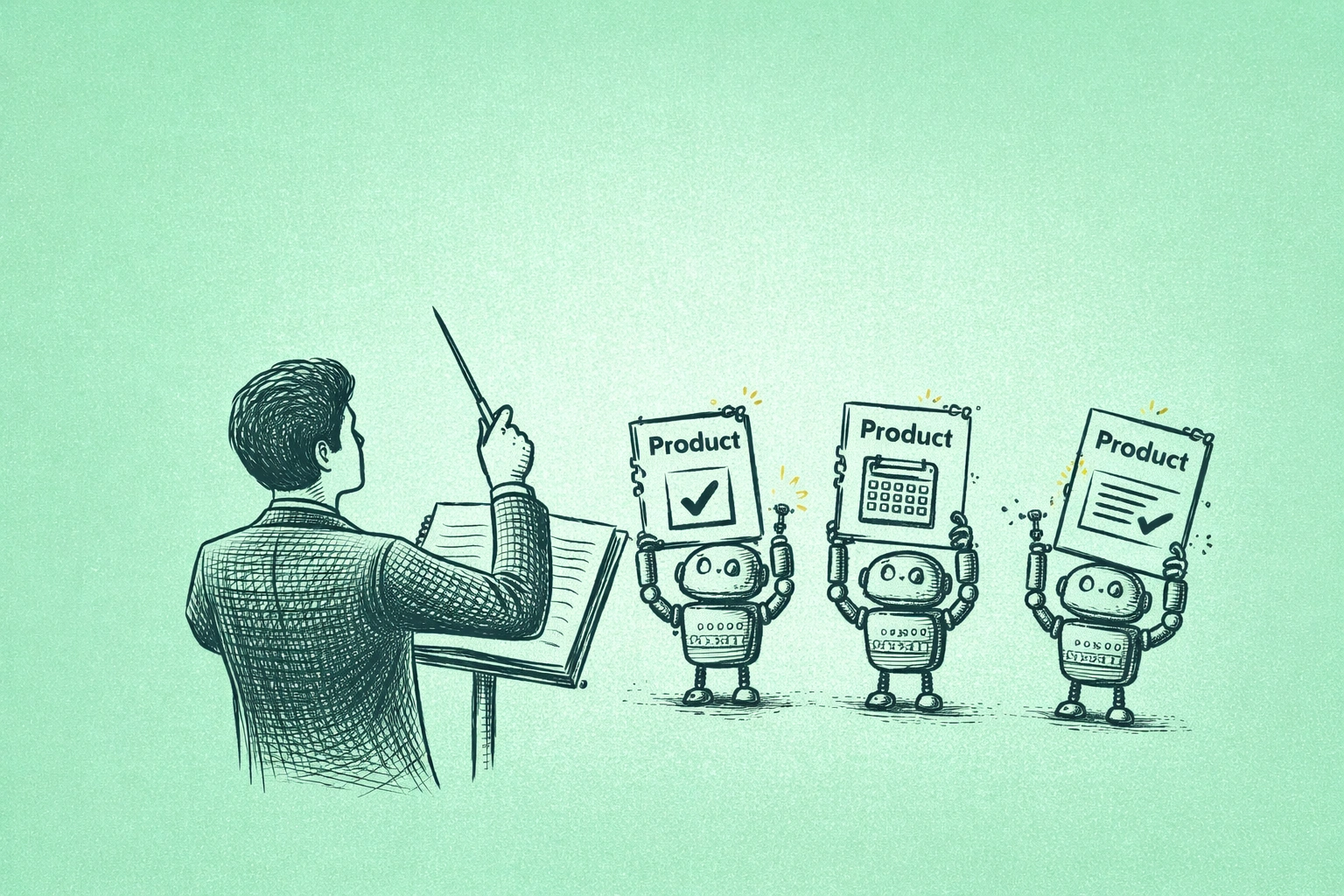

Chatbots follow a loop: you ask, it responds, you act. Agents follow a different loop: you state a goal, the agent plans, executes across whatever tools and systems are relevant, observes what happened, adjusts, and continues until the goal is reached or it determines it needs human input.
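The agent loop described above can be sketched in a few lines of Python. This is an illustration under assumptions, not any vendor's API: `run_agent`, `Outcome`, and the three callbacks (`goal_reached`, `plan_next`, `execute`) are names I made up, and having the planner return `None` is simply one way to model "the agent decides it needs human input."

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Optional

# Each history entry pairs an action with what the agent observed afterwards.
History = list[tuple[str, Any]]


@dataclass
class Outcome:
    status: str  # "done", "needs_human", or "step_limit"
    history: History = field(default_factory=list)


def run_agent(
    goal_reached: Callable[[History], bool],
    plan_next: Callable[[History], Optional[str]],
    execute: Callable[[str], Any],
    max_steps: int = 10,
) -> Outcome:
    history: History = []
    for _ in range(max_steps):
        if goal_reached(history):
            return Outcome("done", history)
        action = plan_next(history)        # plan, using everything seen so far
        if action is None:                 # planner has no safe next step
            return Outcome("needs_human", history)
        observation = execute(action)      # act on a tool or system
        history.append((action, observation))  # observe, then loop to adjust
    # Step budget used up: report whether the last action finished the job.
    status = "done" if goal_reached(history) else "step_limit"
    return Outcome(status, history)


# Toy usage: the "goal" is simply completing three tasks.
result = run_agent(
    goal_reached=lambda h: len(h) >= 3,
    plan_next=lambda h: f"task-{len(h) + 1}",
    execute=lambda action: f"{action} ok",
)
print(result.status, len(result.history))  # prints: done 3
```

The `max_steps` cap is a design choice worth keeping in any real version: a loop that can act on live systems should not be able to run forever.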

That last part matters. The best agentic systems don’t just blindly execute — they have judgment about when to stop and ask. A well-designed agent handling customer refunds, for example, can process refunds within a defined approval limit, log the interaction in the CRM, and schedule a follow-up automatically. But when a situation exceeds that limit or falls outside its defined parameters, it escalates with full context already assembled. The human who gets that escalation isn’t starting from scratch. They’re making one decision on a case that’s already been analyzed.
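Here is a rough Python sketch of that refund example. The approval limit, the list of allowed reasons, and all the names (`RefundRequest`, `Decision`, `handle_refund`) are assumptions for illustration, and the "actions" are just log strings where a real agent would call payment, CRM, and scheduling systems.

```python
from dataclasses import dataclass, field

APPROVAL_LIMIT_CENTS = 10_000  # hypothetical limit: $100.00
ALLOWED_REASONS = {"damaged", "late", "wrong_item"}  # hypothetical policy


@dataclass
class RefundRequest:
    order_id: str
    amount_cents: int
    reason: str


@dataclass
class Decision:
    action: str  # "refunded" or "escalated"
    log: list[str] = field(default_factory=list)
    context: dict = field(default_factory=dict)


def handle_refund(req: RefundRequest, limit_cents: int = APPROVAL_LIMIT_CENTS) -> Decision:
    # Collect every reason the request falls outside the agent's authority.
    problems = []
    if req.amount_cents > limit_cents:
        problems.append("amount exceeds limit")
    if req.reason not in ALLOWED_REASONS:
        problems.append("reason outside defined parameters")

    if not problems:
        # Inside the boundaries: finish the whole job, not just the refund.
        return Decision(
            action="refunded",
            log=[
                f"refund issued: {req.order_id} ({req.amount_cents} cents)",
                f"CRM note added: {req.order_id}",
                f"follow-up scheduled: {req.order_id}",
            ],
        )

    # Outside the boundaries: escalate with the analysis already assembled.
    return Decision(
        action="escalated",
        context={
            "order_id": req.order_id,
            "amount_cents": req.amount_cents,
            "reason": req.reason,
            "limit_cents": limit_cents,
            "why_escalated": problems,
        },
    )


print(handle_refund(RefundRequest("A1", 2_500, "late")).action)   # prints: refunded
print(handle_refund(RefundRequest("A2", 50_000, "late")).action)  # prints: escalated
```

Notice that the escalation path returns a `context` dict rather than a bare "needs review" flag. That is the part that lets the human make one decision instead of redoing the analysis.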

This is what McKinsey means when it projects that 40% of enterprises will rely on AI agents for core operations by 2026. Not AI that assists with core operations. AI that runs them, with humans supervising and handling exceptions.

The Skill Shift Nobody Is Preparing For

If you’ve been thinking about AI in terms of prompting — getting better at asking questions, crafting the right inputs — that skill is becoming less relevant faster than most people realize.

The people creating disproportionate leverage right now aren’t better prompt writers. They’re the ones who have figured out how to orchestrate agents toward a goal. How to break a workflow down into steps that agents can own, define the boundaries of autonomous action versus human judgment, and build the feedback loops that make the system improve over time.

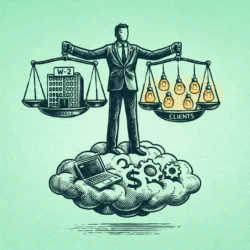

I think about this a lot in the context of solopreneur operations. A solo operator who can deploy a research agent, a drafting agent, a scheduling agent, and a follow-up agent — and who spends their time on the judgment calls and strategy those agents surface — is not competing with other solo operators. They’re competing with small teams. The productivity gap isn’t 20%. It’s the difference between what one person can execute and what five people can.

The AI generalist concept is relevant here. Not someone who knows one AI tool deeply, but someone who can move fluidly across systems, understand how to wire them together, and manage outputs without needing to be in the weeds of every execution. That combination — strategic judgment plus operational fluency with AI systems — is what the labor market is starting to price at a premium, and the gap between people who have it and people who don’t is widening quickly.

Where to Actually Start

The enterprise examples are instructive, but they can feel distant if you’re not running a security operations center or a Fortune 500 performance review process. The practical question is where to start building this capability now, with tools that exist and don’t require an engineering team to deploy.

For individual workflows, Cursor is the clearest example of agentic capability that a non-engineer can understand immediately: it doesn’t just write code, it edits across multiple files, runs tests, and fixes what breaks. Motion applies the same logic to scheduling. It’s not a calendar tool but a system that continuously re-optimizes your entire week as conditions change, without you having to touch it. I’ve written about building a full AI chief of staff protocol from these kinds of tools. The short version is that the value compounds significantly once individual agents start connecting to each other.

For teams, Salesforce Agentforce and Microsoft’s autonomous Copilot agents are where most enterprise agentic deployment is happening right now. The constraint isn’t availability. It’s organizational willingness to actually redesign workflows around what agents can own versus what requires humans. Most companies are still treating these tools like faster chatbots rather than process replacements. The ones that made the conceptual shift first are the ones showing up in the case studies.

The practical starting point is identifying one workflow in your current operation that is repetitive, rules-based, and currently dependent on human execution for no particularly good reason. Not because it requires judgment — because nobody got around to automating it. Build an agent for that one thing. Learn what breaks. Then expand.

The Question Worth Sitting With

According to McKinsey’s research, 90% of enterprise AI use cases are stuck in pilot mode. The reason cited consistently isn’t that the technology doesn’t work. It’s that chatbot-era tools can speed up individual tasks but can’t eliminate the human coordination required to connect those tasks into a completed workflow. Agents change that equation, but only for organizations willing to redesign the workflow rather than just adding AI to the existing one.

That’s a more demanding ask than it sounds. It means deciding what humans should stop doing, which is a harder conversation than deciding what AI should start doing.

The skills that create real job security in this environment are shifting accordingly. The question used to be whether you could do your job well. Increasingly it’s whether you understand which parts of your job should be yours at all — and whether you’re building the judgment and orchestration capability to operate at the level above the work that agents are taking over.

The chatbot era taught us that AI could think alongside us. What’s becoming clear now is that it was always capable of more than that, and the constraint was architectural, not intellectual. Agentic AI isn’t smarter than ChatGPT in any meaningful sense. It’s just finally allowed to finish what it starts.

That turns out to be almost everything.