A researcher at a cybersecurity firm in London ran an experiment last year that I haven’t been able to stop thinking about. She took three seconds of audio from her mother’s voicemail greeting — just a “Hi, you’ve reached Margaret, leave a message” — fed it into a commercially available voice cloning tool, and within minutes had a synthetic version of her mother’s voice that could say anything she typed.

She then called her brother. Played the clone. Asked him a question.

He answered it. Naturally. As if he were talking to his mother.

He didn’t hesitate. He didn’t ask anything. He just talked.

That experiment wasn’t conducted with military-grade AI or stolen research. The tool she used costs less than a streaming subscription. The three seconds of audio came from a public voicemail. The whole setup took about twenty minutes.

This is where we are in 2026. And most people have no idea.

How Fast This Moved

It’s worth understanding the actual technical shift here, because the speed of it is part of what makes the threat so disorienting.

Five years ago, cloning a voice convincingly required hours of high-quality audio. The person being cloned had to read specific training sentences. The process was expensive, slow, and produced results that most people could identify as artificial on a careful listen.

Then zero-shot voice synthesis arrived. Microsoft’s VALL-E, unveiled in a 2023 research paper, demonstrated that three seconds of audio was sufficient to produce a convincing clone. Three seconds. The length of time it takes to say “Hello, who is this?” when you answer an unknown number.

That threshold has only dropped since. The open-source versions of this technology — the ones anyone can download and run — have improved continuously. What required a research lab in 2022 runs on a laptop in 2026.

The audio sources scammers use don’t require any special access. A birthday video on Instagram. A TikTok where you spoke in the background. A voicemail greeting. A previous spam call where you said “hello” before hanging up. The AI analyzes the waveform, the cadence, the emotional register, and constructs a voice model that can then speak any text typed into a keyboard — in real time, during a live call.

I started paying attention to this after reading the FTC’s 2024 data, which showed voice cloning scams had surged past $25 million in reported losses in a single year — and that’s only what got reported. The actual number is almost certainly a multiple of that.

The Specific Scenario You Need to Understand

The scam that’s doing the most damage is called virtual kidnapping, and the name undersells how psychologically brutal it is.

Here’s the version that plays out most commonly. It’s 2pm on a Tuesday. You’re at work. Your phone shows your daughter’s name. You pick up.

What you hear first isn’t a voice. It’s crying. The specific, gasping, trying-to-hold-it-together sound of someone genuinely terrified. And it sounds exactly like her — the pitch, the way she says your name, the particular rhythm of how she cries.

Then a man’s voice. Low. Controlled. “I have your daughter. You’re going to transfer money. If you hang up, she gets hurt.”

In the background, you can still hear her.

Your daughter is fine. She’s at her apartment, making lunch, completely unaware this is happening. The crying is a cloned audio loop. The scammer is running a voice synthesizer on a laptop. But in that moment the distinction is neurologically inaccessible to you, because a brain in full alarm isn’t in a state to question what it hears.

This is not a failure of intelligence. It’s basic biology.

Why Your Brain Cannot Protect You Here

The reason these scams convert is not that people are gullible. It’s that the technology has specifically targeted the part of our threat-response system that bypasses rational evaluation.

When you hear a loved one in distress — particularly a sound that’s been part of your sensory experience for years — the amygdala activates immediately. Fear response. The prefrontal cortex, where you’d run the logical evaluation of “wait, is this real?”, gets functionally suppressed. Evolution designed this sequence for physical threats. Spend time evaluating whether the lion is actually dangerous and you’re already dead. Act first, analyze later.

Security researchers who’ve tested this on families found that in blind tests, people cannot reliably distinguish a modern AI voice clone of a family member from the real thing over a cellular connection. And cellular connections already compress and degrade audio in ways that mask exactly the artifacts that might otherwise give a clone away.

The scammers know this. The emotional escalation — the crying, the panic, the urgency, the “don’t hang up or she gets hurt” — isn’t incidental to the scam. It’s the mechanism. It’s deliberately designed to keep the amygdala in control and the prefrontal cortex offline for long enough to get a bank transfer authorized.

You cannot reason your way through an activated fear response in real time. Which is why the only effective defense has to be built before the call happens.

Voice Biometrics Are Now a Liability

Before getting to the defense, one immediate action item that’s easy to overlook.

If you have voice verification enabled on any sensitive account — your bank, your telecommunications provider, any government service — disable it now and replace it with an authenticator app or a hardware security key.

“My voice is my password” was a reasonable security idea when voice synthesis was expensive and difficult. In 2026 it’s an attack surface. A cloned voice can call your bank, pass the voice verification check, and authorize transfers. Banks including HSBC and Chase have acknowledged this vulnerability. Some have already moved away from voice biometrics as a primary authentication factor.

A YubiKey hardware token or an authenticator app like Google Authenticator requires physical possession of a device. It cannot be cloned from a three-second audio sample. Switch to one of these for anything with financial access.
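To make that concrete, here is a minimal sketch of how a time-based one-time code is typically derived, following the public TOTP standard (RFC 6238) that authenticator apps generally implement. The function name `totp` and the constant `DEMO_SECRET` are made up for illustration, and the secret is the RFC’s published test key, not anything tied to a real account. The point is that the code depends on a secret stored on the device plus the current time, and nothing about your voice enters the calculation.

```python
import base64
import hashlib
import hmac
import struct
import time
from typing import Optional

# RFC 6238 test key ("12345678901234567890") in base32. Illustrative only:
# a real secret is generated by the service and stored on your device.
DEMO_SECRET = "GEZDGNBVGY3TQOJQGEZDGNBVGY3TQOJQ"


def totp(secret_b32: str, at_time: Optional[float] = None,
         step: int = 30, digits: int = 6) -> str:
    """Derive a time-based one-time code from a shared secret (RFC 6238 style)."""
    key = base64.b32decode(secret_b32, casefold=True)
    now = time.time() if at_time is None else at_time
    # The moving factor is the number of `step`-second windows since the epoch.
    counter = int(now // step)
    digest = hmac.new(key, struct.pack(">Q", counter), hashlib.sha1).digest()
    # Dynamic truncation: the low 4 bits of the last byte pick a 4-byte slice.
    offset = digest[-1] & 0x0F
    number = struct.unpack(">I", digest[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(number % 10 ** digits).zfill(digits)


if __name__ == "__main__":
    # Published RFC 6238 test vector: 59 seconds after the epoch, 8 digits.
    print(totp(DEMO_SECRET, at_time=59, digits=8))  # prints 94287082
    # The code for the current 30-second window.
    print(totp(DEMO_SECRET))
```

Without the secret, an attacker with a perfect copy of your voice has nothing to feed into this function. That is the property voice verification lacks.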

The Only Defense That Actually Works

AI detection tools exist. They’re unreliable and too slow for a live phone call. By the time any software analyzes whether the voice you’re hearing is synthetic, you’ve already been in the conversation long enough for the fear response to take hold.

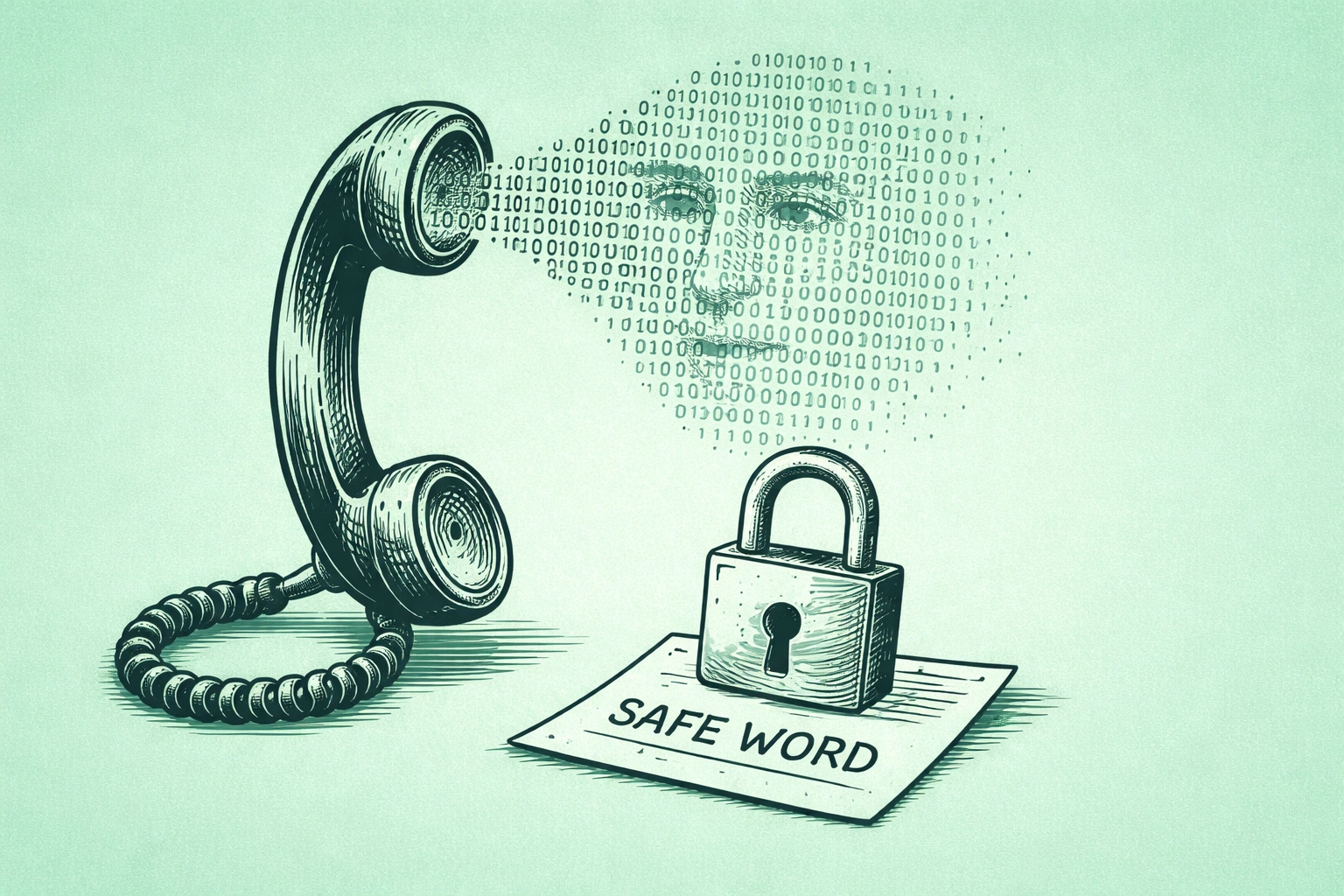

The defense that works is entirely analog, and you can implement it tonight.

It’s a family safe word. A specific word or short phrase that every member of your immediate family knows, that you’ve never used in normal conversation, and that exists nowhere online. Not the dog’s name. Not a street you grew up on. Not anything that could be inferred from your social media history. Something random — “violet harbor” or “ceramic lamp” or whatever two words you land on that have no obvious connection to your life.
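If you would rather not trust your own sense of “random,” a few lines of code run on your own machine can pick the words for you. This is only a sketch: the word list `WORDS` and the function `make_safe_phrase` are hypothetical, and a list this short is for demonstration. A much longer list, such as a diceware-style list of several thousand words, would make the phrase far harder to guess. Run it locally rather than using an online generator, since the whole point is that the phrase never touches the internet.

```python
import secrets

# Hypothetical starter list for illustration; swap in a much longer list
# of ordinary words before relying on the result.
WORDS = [
    "violet", "harbor", "ceramic", "lamp", "walnut", "glacier",
    "trumpet", "saddle", "marble", "lantern", "thistle", "copper",
]


def make_safe_phrase(words, count=2):
    """Pick `count` distinct words using the operating system's secure randomness."""
    pool = sorted(set(words))  # drop duplicates so the phrase never repeats a word
    if len(pool) < count:
        raise ValueError("need at least `count` distinct words")
    rng = secrets.SystemRandom()
    return " ".join(rng.sample(pool, count))


if __name__ == "__main__":
    print(make_safe_phrase(WORDS))
```

If the result happens to match something real in your life, such as a pet’s name or a street you lived on, run it again.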

The protocol is simple: if you ever receive a call asking for emergency help or money — no matter how real the voice sounds, no matter how frightened they sound — before you do anything else, you ask for the safe word. If they can’t give it, you hang up and call the real person directly.

The scammer has your voice. They have your history. They can synthesize your emotional responses. They don’t have the word you said quietly at a dinner table. That word is the one piece of information that lives only in physical, offline memory — and it’s the one thing the technology cannot access.

When you set this up, have the actual conversation with the people in your family. Explain why it exists. Practice the scenario once so that asking for the safe word under pressure feels like a natural reflex rather than an awkward interruption. The discomfort of that conversation is trivial compared to what it’s protecting against.

What to Do If the Call Is Happening Right Now

If you didn’t have a safe word in place and you’re receiving a call that matches this pattern, two things work.

The first is the callback maneuver. Hang up immediately, regardless of what they say will happen if you do, and call the person directly on their actual number. If you share locations with each other, also check where they are in Find My iPhone or Google Maps. If they answer their phone normally or their location shows them at home, you have your answer. The instruction not to hang up is the scam’s most important mechanism. Ignore it.

The second, if you stay on the line, is an offline memory question. Not their birthday. Not the dog’s name. Not anything that appears anywhere on the internet. “What did we eat for dinner on Sunday?” “What color is the couch in the living room?” “What was the last movie we watched together?” These questions ask for information that exists only in shared physical experience. A voice model trained on audio samples cannot answer them. The AI knows what your family member sounds like. It doesn’t know what your living room looks like.

One more thing worth knowing: some calls are purely fishing expeditions. A call where you hear silence when you pick up, or a generic “hello?” before you’ve said anything, is sometimes designed to record your voice for use in a future scam targeting your family members. If you pick up and hear nothing, or hear an ambiguous prompt, hang up without speaking. “Yes” and “Who is this?” are exactly the samples being collected.

The Conversation Worth Having This Week

I’ve been thinking about digital privacy a lot, in the context of what we share publicly without thinking about it: the data that banks collect, the information smart devices record, the profiles that get built from ordinary online activity. Most of that risk is abstract and slow-moving. This one is immediate and personal in a way the others aren’t.

The scammers using this technology are specifically targeting the people you love most and the neurological responses that are hardest to override. That’s a calculated choice. They’ve figured out that your password manager and your two-factor authentication protect your accounts effectively, so they went around all of it by targeting your family instead of your credentials.

The defense is a ten-minute conversation and a two-word phrase.

Have the conversation. Pick the phrase. The technology exists and it works and it’s available to anyone willing to pay for a cheap subscription. The only variable still in your control is whether the people you’d do anything to protect know what to say when someone calls them using your voice.